data breaches

New Botnet Era: PolarEdge, GeoServer Exploits, and Gayfemboy Malware

ORB-style relay networks, SDK-based bandwidth theft, and Mirai spin-offs fuel a new wave of silent monetization and stealthy ops

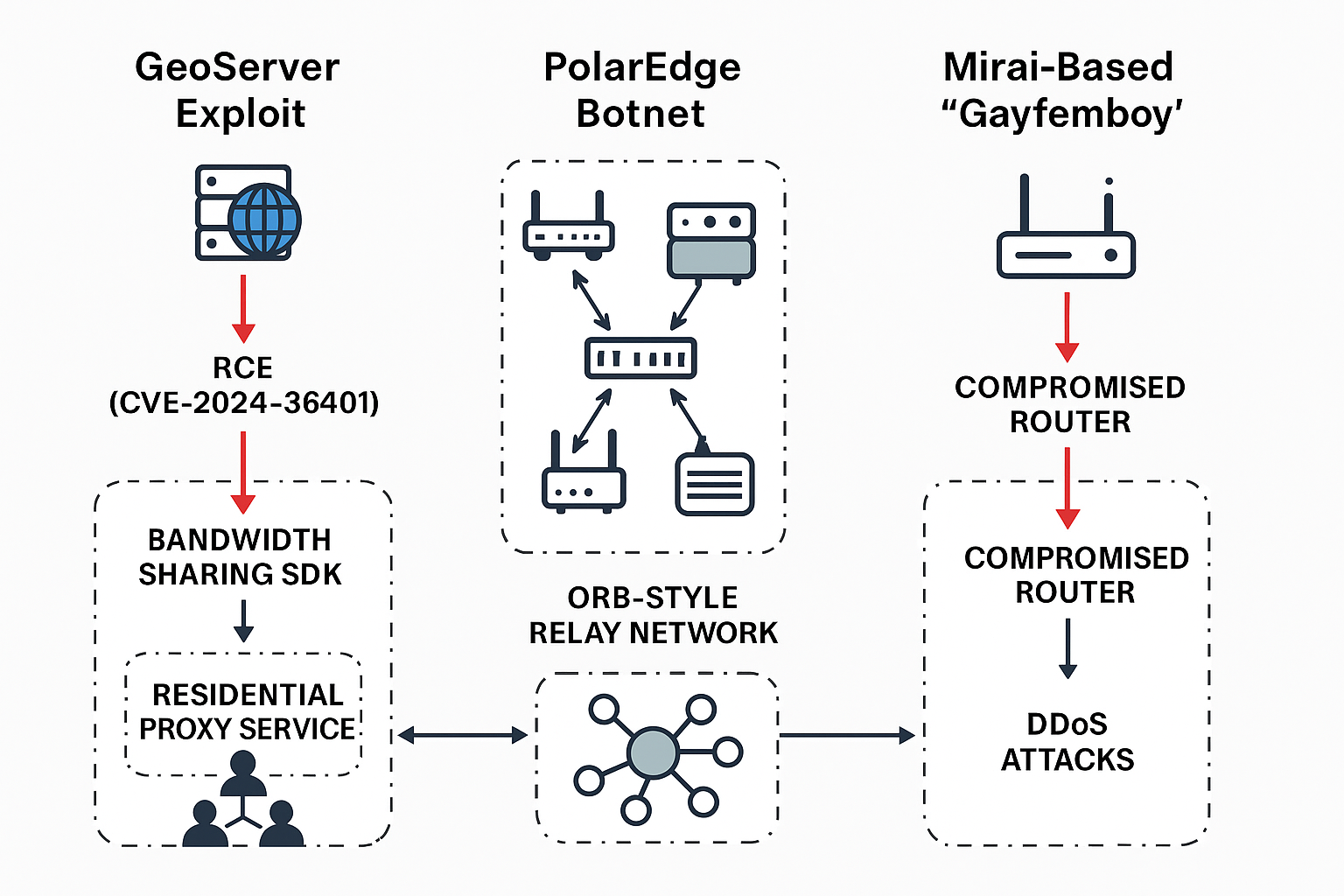

Attackers are chaining a critical GeoServer RCE with novel monetization tactics and ORB-like botnets to quietly profit and persist. New research details SDK-based bandwidth resale on compromised GeoServer hosts, a ballooning PolarEdge ORB built on edge devices, and a resurfaced Mirai variant dubbed “Gayfemboy” hitting routers and gateways worldwide.

Cybercriminals are moving beyond smash-and-grab botnets toward stealthy monetization and covert relay infrastructure. Unit 42 warns that hijacked GeoServer systems are running “passive-income” SDKs that sell victims’ bandwidth, while Censys researchers say the PolarEdge botnet now resembles an Operational Relay Box (ORB) network spanning tens of thousands of edge devices. Meanwhile, Fortinet has tracked a renewed global surge of the Mirai-based “Gayfemboy” malware exploiting SOHO and enterprise gear.

What’s New

- GeoServer RCE monetized, not mined. A campaign exploits CVE-2024-36401 (CVSS 9.8) in OSGeo GeoServer/GeoTools to quietly deploy legitimate-looking SDKs and apps that resell the host’s bandwidth via residential-proxy services: no miner needed, minimal CPU, long dwell time. Unit 42 has observed internet-wide probing since March 2025 and more than 7,100 exposed GeoServer instances across 99 countries.

- PolarEdge balloons into an ORB. Censys and prior Sekoia work describe PolarEdge, a TLS-backdoored botnet abusing Cisco/ASUS/QNAP/Synology and other edge devices since mid-2023. Recent tallies show ~40,000 active devices, heavily concentrated in South Korea and the U.S., behaving like an Operational Relay Box network rather than a typical DDoS herd.

- ‘Gayfemboy’ returns with broader exploits. Fortinet details a Mirai-lineage campaign (“Gayfemboy”) adding fresh N-days against DrayTek, TP-Link, Raisecom and Cisco to regain footholds and stage DDoS capability, with targets spanning manufacturing, tech and media across multiple regions.

“Criminals have used the vulnerability to deploy legitimate software development kits (SDKs) … to gain passive income via network sharing or residential proxies.” — Unit 42, Palo Alto Networks.

“ORBs are compromised exit nodes that forward traffic … while the device continues to operate normally, making detection … unlikely.” — Himaja Motheram, Censys (via The Hacker News).

“While Gayfemboy inherits structural elements from Mirai, it introduces notable modifications that enhance both its complexity and ability to evade detection.” — Vincent Li, Fortinet.

“Defenders must treat exposed GeoServer and orphaned edge gear as high-risk egress points. Patch fast, kill default services, and watch for quiet bandwidth drains and high, odd-port TLS beacons—these are today’s telltales of ORB-style operations.” — El Mostafa Ouchen, cybersecurity author and analyst.

Technical Analysis

1) GeoServer CVE-2024-36401 attack chain

- Vuln: Unsafe evaluation of property names as XPath expressions (via GeoTools → Apache Commons JXPath) enables unauthenticated RCE across WFS/WMS/WPS request paths. Fixed in 2.22.6 / 2.23.6 / 2.24.4 / 2.25.2; the workaround is to remove gt-complex-*.jar.

- Observed TTPs (Unit 42):

- Initial access: Crafted WFS/WMS payloads (e.g., GetPropertyValue) to execute Runtime.exec() on the target.

- Staging: Payloads fetched from attacker-hosted transfer.sh instances; executables written in Dart interact with legitimate bandwidth-sharing services.

- Objective: Stealth monetization via residential-proxy SDKs; minimal resource use, long persistence.
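The initial-access step above can be hunted for in web-server or reverse-proxy logs. The sketch below is a minimal, hypothetical heuristic, not Unit 42's detection logic: it assumes request URLs are available as strings and flags WFS/WMS query parameters (valueReference, propertyName) whose values look like the Java method calls smuggled into XPath in public CVE-2024-36401 proofs of concept. The function name and marker list are illustrative assumptions, and POST bodies are not covered.

```python
from urllib.parse import parse_qs, urlparse

# Hypothetical marker list: substrings typical of Java method calls
# embedded in XPath property expressions in public CVE-2024-36401 PoCs.
SUSPICIOUS_MARKERS = ("exec(", "java.lang.runtime", "getruntime", "processbuilder")

# Query parameters that carry property names / XPath in WFS and WMS requests.
XPATH_PARAMS = ("valuereference", "propertyname")


def is_suspicious_geoserver_request(url: str) -> bool:
    """Return True if an OGC request carries a code-like XPath value."""
    parsed = urlparse(url)
    path = parsed.path.lower()
    if not any(svc in path for svc in ("/wfs", "/wms", "/ows")):
        return False
    # parse_qs URL-decodes values; keys are lower-cased because OGC
    # parameter names are case-insensitive.
    params = {k.lower(): v for k, v in parse_qs(parsed.query).items()}
    for name in XPATH_PARAMS:
        for value in params.get(name, []):
            lowered = value.lower()
            if any(marker in lowered for marker in SUSPICIOUS_MARKERS):
                return True
    return False
```

A match is a lead for investigation, not proof of compromise; pair it with host-side evidence such as unexpected child processes of the GeoServer JVM.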

2) PolarEdge ORB characteristics

- Initial footholds: N-days including CVE-2023-20118 on EoL Cisco RV routers; later broadened to ASUS/NAS/IP cameras, with a TLS backdoor (Mbed TLS/PolarSSL) deployed via FTP/scripted droppers (“q”, “t.tar”, “cipher_log”).

- C2 & stealth: Backdoor listens on high, non-standard TCP ports (40k–50k); log cleanup and persistence; ~40,000 active nodes as of Aug. 2025.

- Use case: Operational Relay Box—stable residential/ISP space used to proxy follow-on intrusions and mask origin.
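The port behavior described above lends itself to a simple triage check. A minimal sketch, assuming you already have (protocol, local port) pairs for listening sockets on a device or from an external scan (collection is out of scope, and the allowlist is a hypothetical placeholder): it flags TCP listeners in the 40000–50000 range reported for the PolarEdge backdoor. Legitimate services also use high ports, so a hit is only a starting point.

```python
from typing import AbstractSet, Iterable, List, Tuple

# Port range reported for the PolarEdge TLS backdoor (inclusive).
SUSPECT_RANGE = range(40000, 50001)


def flag_high_port_listeners(
    listeners: Iterable[Tuple[str, int]],
    allowlist: AbstractSet[int] = frozenset(),
) -> List[int]:
    """Return sorted TCP ports in the suspect range that are not allowlisted."""
    hits = {
        port
        for proto, port in listeners
        if proto.lower() == "tcp" and port in SUSPECT_RANGE and port not in allowlist
    }
    return sorted(hits)
```

For example, listeners `[("tcp", 22), ("tcp", 47123), ("udp", 45000)]` yield `[47123]`: SSH is outside the range and the UDP socket is ignored.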

3) ‘Gayfemboy’ Mirai variant

- Exploits & targets: Recent activity against DrayTek, TP-Link, Raisecom, Cisco; multi-arch binaries (ARM/AArch64/MIPS/PPC/x86), anti-analysis (UPX header tweaks), watchdog/monitor/persistence, and DDoS modules over UDP/TCP/ICMP.
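Because the campaign ships binaries for several CPU architectures and tampers with UPX headers, first-pass triage of a recovered sample can read the ELF machine field and look for UPX markers. The sketch below is a hypothetical helper, not Fortinet's tooling: it maps common ELF e_machine values to architecture names and reports whether the usual "UPX!" magic and the UPX info string are present. Finding the info string without the magic is treated only as a hint of header tampering; samples can also strip both.

```python
import struct

# Common ELF e_machine values (from the ELF specification).
EM_NAMES = {3: "x86", 8: "MIPS", 20: "PowerPC", 40: "ARM", 62: "x86-64", 183: "AArch64"}

UPX_MAGIC = b"UPX!"
UPX_INFO = b"This file is packed with the UPX"


def triage_elf(data: bytes) -> dict:
    """Return architecture and UPX-marker observations for an ELF blob."""
    if len(data) < 20 or data[:4] != b"\x7fELF":
        raise ValueError("not an ELF file")
    # EI_DATA (byte 5): 1 = little-endian, 2 = big-endian.
    endian = "<" if data[5] == 1 else ">"
    # e_machine is a 16-bit field at offset 18 in both ELF32 and ELF64.
    (machine,) = struct.unpack_from(endian + "H", data, 18)
    has_magic = UPX_MAGIC in data
    has_info = UPX_INFO in data
    return {
        "arch": EM_NAMES.get(machine, f"unknown({machine})"),
        "upx_magic": has_magic,
        "upx_info_string": has_info,
        # Info string without the magic may indicate an altered UPX header.
        "possible_upx_tamper": has_info and not has_magic,
    }
```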

MITRE ATT&CK (selected)

- Initial Access: Exploit Public-Facing App (T1190).

- Execution: Command & Scripting Interpreter (T1059); Native API (T1106).

- Persistence: Scheduled Task/Cron (T1053.003).

- Defense Evasion: Masquerading (T1036); Impair Defenses (T1562).

- Discovery: System Information Discovery (T1082); File and Directory Discovery (T1083).

- C2: Application Layer Protocol: Web Protocols (T1071.001), carried over TLS.

- Resource Development/Impact: Compromise Infrastructure (T1584) for relay nodes; Resource Hijacking (T1496) via SDK-based bandwidth resale (campaign-specific).

(Technique IDs mapped from ATT&CK Enterprise matrix; exact subtechniques may vary per host/device.)

Impact & Response

- Who’s affected:

- GeoServer operators (public-facing instances prior to patched versions).

- ISPs/enterprises with legacy SOHO routers, NAS, IP cameras, VoIP phones and edge gateways running vulnerable firmware.

- Sectors: Manufacturing, tech, construction, media/communications; global spread (notably South Korea, U.S., parts of Europe).

- Actions taken / guidance:

- Patch/mitigate GeoServer immediately to 2.22.6/2.23.6/2.24.4/2.25.2+; if constrained, remove gt-complex-*.jar (functional impact possible).

- Hunt for SDK monetization artifacts (Dart executables, transfer.sh downloads, suspicious cron entries), anomalous egress/bandwidth spikes, and residential-proxy traffic.

- Edge device triage: Disable WAN admin, block management ports, update firmware, rotate creds, and monitor for high random ports (40–50k) with TLS beacons tied to Mbed TLS backdoors.

- Regulatory/Legal: Organizations running abused infrastructure risk AUP violations with ISPs, potential data-protection exposure if relayed traffic is linked to attacks, and supply-chain liability where SDKs were embedded without appropriate vetting.
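The patching guidance above reduces to a per-release-line version comparison. A minimal sketch, assuming plain numeric version strings such as 2.24.3 (snapshot or vendor-suffixed builds are not handled): it treats 2.22.6, 2.23.6, 2.24.4 and 2.25.2 as the first fixed releases for their lines, assumes any line newer than 2.25 shipped with the fix, and treats older, unlisted lines as unpatched. The function names are hypothetical; confirm results against the official GeoServer advisory.

```python
from typing import Tuple

# First fixed release per 2.x line for CVE-2024-36401, as listed above.
FIXED_IN = {(2, 22): 6, (2, 23): 6, (2, 24): 4, (2, 25): 2}


def parse_version(version: str) -> Tuple[int, int, int]:
    """Parse 'MAJOR.MINOR.PATCH' into integers (a missing patch counts as 0)."""
    parts = [int(p) for p in version.strip().split(".")]
    while len(parts) < 3:
        parts.append(0)
    return parts[0], parts[1], parts[2]


def is_patched_for_cve_2024_36401(version: str) -> bool:
    major, minor, patch = parse_version(version)
    line = (major, minor)
    if line in FIXED_IN:
        return patch >= FIXED_IN[line]
    # Assumption: lines newer than 2.25 include the fix; older lines never received one.
    return line > (2, 25)
```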

Background

- CVE-2024-36401 entered CISA KEV in July 2024 amid active exploitation; GeoServer issued multiple patch trains, plus a fix for a high-severity XXE flaw (CVE-2025-30220) in June 2025.

- PolarEdge was first documented by Sekoia (Feb. 2025) and later by Censys (Aug. 2025), who framed it as an ORB-like relay for operational traffic, not mass scanning or coin mining.

- Gayfemboy emerged publicly in 2024; Fortinet’s Aug. 22, 2025 analysis shows new exploits, architectures and anti-analysis techniques.

What’s Next

Expect more quiet monetization (bandwidth resale/SDK abuse) and relay-grade botnets that prioritize stealth over volume. Immediate priorities: patch GeoServer, inventory and segment edge gear, and add detections for ORB-style egress and odd-port TLS. Threat intel sharing between ISPs, cloud providers and enterprises will be key to disrupting these low-noise campaigns.

Sources: Unit 42 (Palo Alto Networks) – GeoServer CVE-2024-36401 exploitation and SDK monetization; NVD/GeoServer project advisories; The Hacker News (Aug. 23, 2025) overview on GeoServer, PolarEdge, and Gayfemboy.

Censys & ISMG – PolarEdge ORB scale (~40k devices), edge device exploitation, and ORB behavior; Sekoia early reporting (Feb. 2025); Fortinet FortiGuard Labs (Aug. 22, 2025) on Mirai “Gayfemboy” exploits, variants, and anti-analysis features.

Additional context from CVE records (2024–2025), CISA KEV entries, and prior research linking Redis cryptojacking and TLS backdoors in PolarEdge campaigns.

Cloudflare Outage Disrupts Global Internet — Company Restores Services After Major Traffic Spike

November 18, 2025 — MAG212NEWS

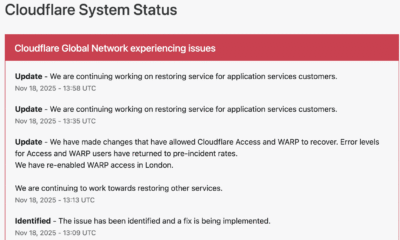

A significant outage at Cloudflare, one of the world’s leading internet infrastructure providers, caused widespread disruptions across major websites and online services on Tuesday. The incident, which began mid-morning GMT, temporarily affected access to platforms including ChatGPT, X (formerly Twitter), and numerous business, government, and educational services that rely on Cloudflare’s network.

According to Cloudflare, the outage was triggered by a sudden spike in “unusual traffic” flowing into one of its core services. The surge caused internal components to return 500-series error messages, leaving users unable to access services across regions in Europe, the Middle East, Asia, and North America.

Impact Across the Web

Because Cloudflare provides DNS, CDN, DDoS mitigation, and security services for millions of domains — powering an estimated 20% of global web traffic — the outage had swift and wide-reaching effects.

Users reported:

- Website loading failures

- “Internal Server Error” and “Bad Gateway” messages

- Slowdowns on major social platforms

- Inaccessibility of online tools, APIs, and third-party authentication services

The outage also briefly disrupted Cloudflare’s own customer-support portal, highlighting the interconnected nature of the company’s service ecosystem.

Cloudflare’s Response and Restoration

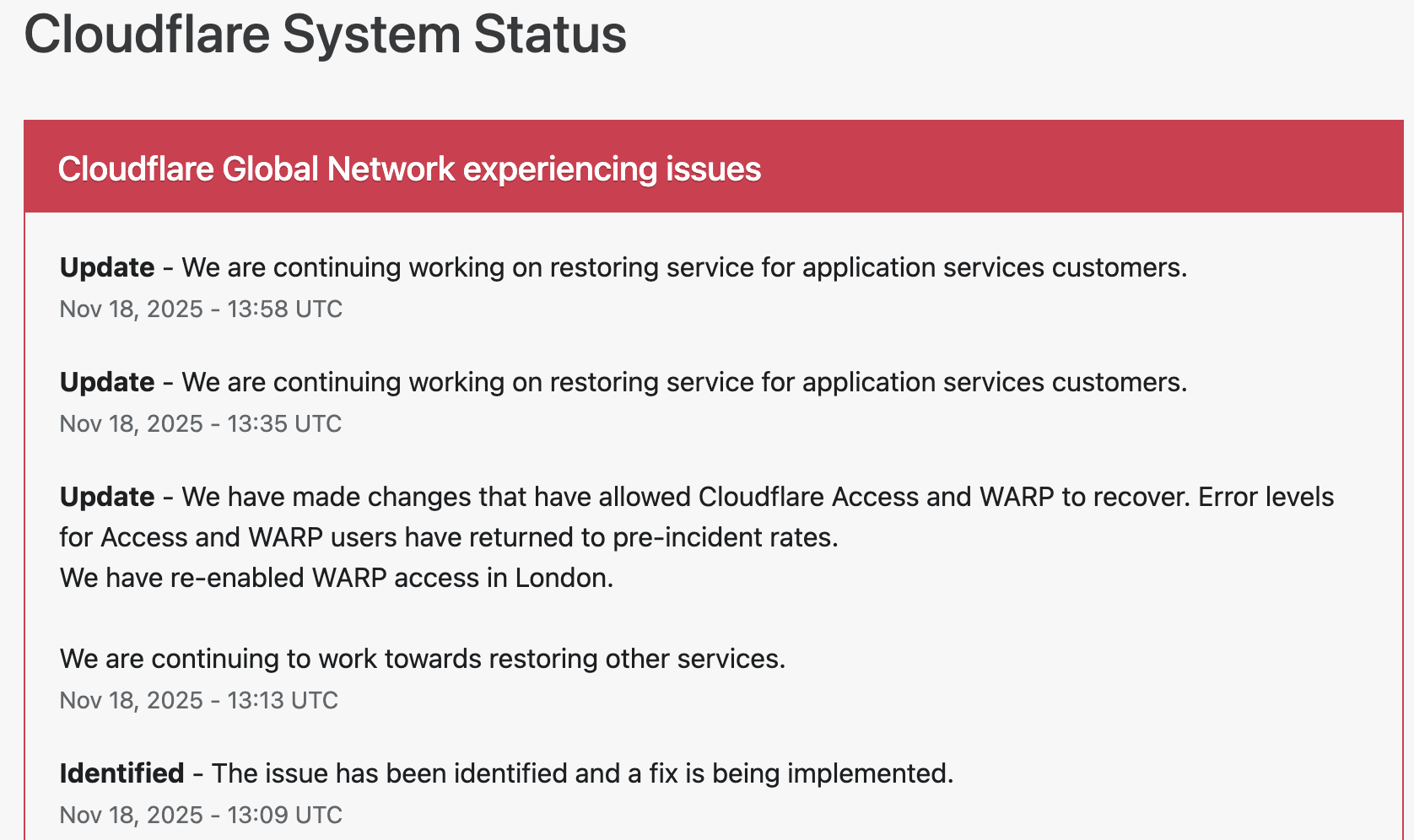

Cloudflare responded within minutes, publishing updates on its official status page and confirming that engineering teams were investigating the anomaly.

The company took the following steps to restore operations:

1. Rapid Detection and Acknowledgement

Cloudflare engineers identified elevated error rates tied to an internal service degradation. Public communications were issued to confirm the outage and reassure customers.

2. Isolating the Affected Systems

To contain the disruption, Cloudflare temporarily disabled or modified specific services in impacted regions. Notably, the company deactivated its WARP secure-connection service for users in London to stabilize network behavior while the fix was deployed.

3. Implementing Targeted Fixes

Technical teams rolled out configuration changes to Cloudflare Access and WARP, which successfully reduced error rates and restored normal traffic flow. Services were gradually re-enabled once systems were verified stable.

4. Ongoing Root-Cause Investigation

While the unusual-traffic spike remains the confirmed trigger, Cloudflare stated that a full internal analysis is underway to determine the exact source and prevent a recurrence.

By early afternoon UTC, Cloudflare confirmed that systems had returned to pre-incident performance levels, and affected services worldwide began functioning normally.

Why This Matters

Tuesday’s outage underscores a critical truth about modern internet architecture: a handful of infrastructure companies underpin a massive portion of global online activity. When one of them experiences instability — even briefly — the ripple effects are immediate and worldwide.

For businesses, schools, governments, and content creators, the incident is a reminder of the importance of:

- Redundant DNS/CDN providers

- Disaster-recovery and failover plans

- Clear communication protocols during service outages

- Vendor-dependency risk assessments

Cloudflare emphasized that no evidence currently points to a cyberattack, though the nature of the traffic spike remains under investigation.

Looking Ahead

As Cloudflare completes its post-incident review, the company is expected to provide a detailed breakdown of the technical root cause and outline steps to harden its infrastructure. Given Cloudflare’s central role in global internet stability, analysts say the findings will be watched closely by governments, cybersecurity professionals, and enterprise clients.

For now, services are restored — but the outage serves as a powerful reminder of how interconnected and vulnerable the global web can be.

Cloudflare Outage Analysis: Systemic Failure in Edge Challenge Mechanism Halts Global Traffic

SAN FRANCISCO, CA — A widespread disruption across major internet services, including AI platform ChatGPT and social media giant X (formerly Twitter), has drawn critical attention to the stability of core internet infrastructure. The cause traces back to a major service degradation at Cloudflare, the dominant content delivery network (CDN) and DDoS mitigation provider. Users attempting to access affected sites were met with an opaque, yet telling, error message: “Please unblock challenges.cloudflare.com to proceed.”

This incident was not a simple server crash but a systemic failure within the crucial Web Application Firewall (WAF) and bot management pipeline, resulting in a cascade of HTTP 5xx errors that effectively severed client-server connections for legitimate users.

The Mechanism of Failure: challenges.cloudflare.com

The error message observed globally points directly to a malfunction in Cloudflare’s automated challenge system. The subdomain challenges.cloudflare.com is central to the company’s security and bot defense strategy, acting as an intermediate validation step for traffic suspected of being malicious (bots, scrapers, or DDoS attacks).

This validation typically involves:

- Browser Integrity Check (BIC): A non-invasive test ensuring the client browser is legitimate.

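- Managed Challenge: A dynamic check in which Cloudflare selects the challenge type based on request characteristics, typically a non-interactive test such as proof-of-work, escalating to an interactive one only when needed.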

- Interactive Challenge (CAPTCHA): A final, user-facing verification mechanism.

In a healthy system, a user passing through Cloudflare’s edge network is either immediately granted access or temporarily routed to this challenge page for verification.

During the outage, however, the challenge logic itself appears to have failed at the edge of Cloudflare’s network. When the system was invoked (likely because of high load or a misconfiguration), the expected security response, a functional challenge page, instead returned an internal server error (a 500-level status code). This meant:

- The Request Loop: Legitimate traffic was correctly flagged for a challenge, but the server hosting the challenge mechanism failed to process or render the page correctly.

- The HTTP 500 Cascade: Instead of displaying the challenge, the Cloudflare edge server returned a “500 Internal Server Error” to the client, sometimes obscured by the text prompt to “unblock” the challenges domain. This effectively created a dead end, blocking legitimate users from reaching the origin server (e.g., OpenAI’s backend for ChatGPT).
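From the client side, this failure mode can be distinguished from a normal challenge by inspecting response metadata. A minimal sketch, assuming you have a status code and a dict of response headers from any HTTP client: Cloudflare documents a `cf-mitigated: challenge` header on challenge-page responses, and proxied responses typically carry `server: cloudflare` and a `cf-ray` header. The category labels are hypothetical, and a 5xx seen through Cloudflare may still originate at the customer's origin server rather than the edge.

```python
from typing import Mapping


def classify_edge_response(status: int, headers: Mapping[str, str]) -> str:
    """Roughly classify a response seen through Cloudflare (heuristic only)."""
    h = {k.lower(): v.lower() for k, v in headers.items()}
    via_cloudflare = h.get("server") == "cloudflare" or "cf-ray" in h
    if h.get("cf-mitigated") == "challenge":
        return "challenge"       # a challenge page was served as designed
    if status >= 500 and via_cloudflare:
        return "cloudflare_5xx"  # server error returned through Cloudflare
    if status >= 500:
        return "other_5xx"
    return "ok" if status < 400 else "client_error"
```

During an incident like this one, a spike in `cloudflare_5xx` with no `challenge` responses would match the dead end described above.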

Technical Impact on Global Services

The fallout underscored the concentration risk inherent in modern web architecture. As a reverse proxy, Cloudflare sits between the end-user and the origin server for a vast percentage of the internet.

For services like ChatGPT, which rely heavily on fast, secure, and authenticated API calls and constant data exchange, the WAF failure introduced severe latency and outright connection refusal. A failure in Cloudflare’s global network meant that fundamental features such as DNS resolution, TLS termination, and request routing were compromised, leading to:

- API Timeouts: Applications utilizing Cloudflare’s API for configuration or deployment experienced critical failures.

- Widespread Service Degradation: The systemic 5xx errors at the L7 (Application Layer) caused services to appear “down,” even if the underlying compute resources and databases of the origin servers remained fully operational.

Cloudflare’s official status updates confirmed they were investigating an issue impacting “multiple customers: Widespread 500 errors, Cloudflare Dashboard and API also failing.” While the exact trigger was later traced to an internal platform issue (in some historical Cloudflare incidents, this has been a BGP routing error or a misconfigured firewall rule pushed globally), the user-facing symptom highlighted the fragility of relying on a single third-party for security and content delivery on a global scale.

Mitigation and the Single Point of Failure

While Cloudflare teams worked to roll back configuration changes and isolate the fault domain, the incident renews discussion on the “single point of failure” doctrine. When a critical intermediary layer—responsible for security, routing, and caching—experiences a core logic failure, the entire digital economy resting on it is exposed.

Engineers and site reliability teams are now expected to further scrutinize multi-CDN and multi-cloud strategies, ensuring that critical application traffic paths are not entirely dependent on a single third-party’s edge infrastructure, a practice often challenging due to cost and operational complexity. The “unblock challenges” error serves as a stark reminder of the technical chasm between a user’s browser and the complex, interconnected security apparatus that underpins the modern web.
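The multi-CDN idea can be illustrated with a simple ordered-failover selector. A minimal sketch, assuming each CDN or origin path exposes an HTTP health URL (the example.com hostnames below are placeholders): it probes endpoints in priority order and returns the first that answers without a 5xx. Production setups usually fail over at the DNS or load-balancer layer rather than in application code; the probe is injectable so the selection logic can be tested without network access.

```python
import urllib.error
import urllib.request
from typing import Callable, Optional, Sequence


def http_probe(url: str, timeout: float = 3.0) -> bool:
    """Return True if the URL answers with a status below 500 within the timeout."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status < 500
    except urllib.error.HTTPError as err:
        # HTTPError is raised for 4xx/5xx; only 5xx counts as unhealthy here.
        return err.code < 500
    except OSError:
        # URLError, timeouts and connection resets are all OSError subclasses.
        return False


def pick_endpoint(
    endpoints: Sequence[str],
    probe: Callable[[str], bool] = http_probe,
) -> Optional[str]:
    """Return the first healthy endpoint in priority order, or None if all fail."""
    for endpoint in endpoints:
        if probe(endpoint):
            return endpoint
    return None


# Placeholder health URLs for a primary and a secondary provider.
ENDPOINTS = ["https://cdn-a.example.com/health", "https://cdn-b.example.com/health"]
```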

Manufacturing Software at Risk from CVE-2025-5086 Exploit

Dassault Systèmes patches severe vulnerability in Apriso manufacturing software that could let attackers bypass authentication and compromise factories worldwide.

A newly disclosed flaw, tracked as CVE-2025-5086, poses a major security risk to manufacturers using Dassault Systèmes’ DELMIA Apriso platform. The bug could allow unauthenticated attackers to seize control of production environments, prompting urgent patching from the vendor and warnings from cybersecurity experts.

A critical vulnerability in DELMIA Apriso, a manufacturing execution system used by global industries, could let hackers bypass authentication and gain full access to sensitive production data, according to a security advisory published this week.

Dassault Systèmes confirmed the flaw, designated CVE-2025-5086, affects multiple versions of Apriso and scored 9.8 on the CVSS scale, placing it in the “critical” category. Researchers said the issue stems from improper authentication handling that allows remote attackers to execute privileged actions without valid credentials.

The company has released security updates and urged immediate deployment, warning that unpatched systems could become prime targets for industrial espionage or sabotage. The flaw is particularly alarming because Apriso integrates with enterprise resource planning (ERP), supply chain, and industrial control systems, giving attackers a potential foothold in critical infrastructure.

- “This is the kind of vulnerability that keeps CISOs awake at night,” said Maria Lopez, industrial cybersecurity analyst at Kaspersky ICS CERT. “If exploited, it could shut down production lines or manipulate output, creating enormous financial and safety risks.”

- “Manufacturing software has historically lagged behind IT security practices, making these flaws highly attractive to threat actors,” noted James Patel, senior researcher at SANS Institute.

- El Mostafa Ouchen, cybersecurity author, told MAG212News: “This case shows why manufacturing execution systems must adopt zero-trust principles. Attackers know that compromising production software can ripple across supply chains and economies.”

- “We are actively working with customers and partners to ensure systems are secured,” Dassault Systèmes said in a statement. “Patches and mitigations have been released, and we strongly recommend immediate updates.”

Technical Analysis

The flaw resides in Apriso’s authentication module. Improper input validation in login requests allows attackers to bypass session verification, enabling arbitrary code execution with administrative privileges. Successful exploitation could:

- Access or modify production databases.

- Inject malicious instructions into factory automation workflows.

- Escalate attacks into connected ERP and PLM systems.

Mitigations include applying vendor patches, segmenting Apriso servers from external networks, enforcing MFA on supporting infrastructure, and monitoring for abnormal authentication attempts.
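Monitoring for abnormal authentication attempts can start with a sliding-window count of failed logins per source. A minimal sketch, assuming authentication events have already been extracted as (timestamp in seconds, source IP, success flag) tuples from whatever logs front the Apriso servers; the tuple format, the 60-second window and the threshold of 5 are illustrative assumptions, not vendor guidance.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, Iterable, Set, Tuple

AuthEvent = Tuple[float, str, bool]  # (timestamp_seconds, source_ip, succeeded)


def flag_auth_bursts(
    events: Iterable[AuthEvent], window: float = 60.0, threshold: int = 5
) -> Set[str]:
    """Return source IPs with at least `threshold` failed logins inside any window."""
    recent: Dict[str, Deque[float]] = defaultdict(deque)
    flagged: Set[str] = set()
    for ts, ip, succeeded in sorted(events):
        if succeeded:
            continue
        q = recent[ip]
        q.append(ts)
        # Drop failures older than the sliding window ending at ts.
        while ts - q[0] > window:
            q.popleft()
        if len(q) >= threshold:
            flagged.add(ip)
    return flagged
```

Five failures from one address within 40 seconds are flagged, while the same number spread over several minutes is not; tune both parameters to the site's normal login patterns.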

Impact & Response

Organizations in automotive, aerospace, and logistics sectors are particularly exposed. Exploited at scale, the vulnerability could cause production delays, supply chain disruptions, and theft of intellectual property. Security teams are advised to scan their environments, apply updates, and coordinate incident response planning.

Background

This disclosure follows a string of high-severity flaws in industrial and operational technology (OT) software, including vulnerabilities in Siemens’ TIA Portal and Rockwell Automation controllers. Experts warn that adversaries—ranging from ransomware gangs to state-sponsored groups—are increasingly focusing on OT targets due to their high-value disruption potential.

Conclusion

The CVE-2025-5086 flaw underscores the urgency for manufacturers to prioritize cybersecurity in factory software. As digital transformation accelerates, securing industrial platforms like Apriso will be critical to ensuring business continuity and protecting global supply chains.